A Weird Old Tip About EC2 Instances

2015-02-07 - By Robert Elder

Let's say that you launch two EC2 instances, one after another. They both have identical AMIs, memory, configurations etc. Next you run some benchmarks on them. Are the benchmarks going to have the same results? If you said yes, you're completely wrong in a big way, and so was I. Not knowing this fact could be costing you a lot of money!

It turns out that even for the same instance types, the underlying physical processor can be different, which is not entirely surprising since we all know that the smaller instance types on AWS are virtualized. What is surprising is that the differences in the physical processor leak through into the virtual machine in a very obvious way.

This can be easily demonstrated by launching your instances, and running

cat /proc/cpuinfo

For my test, I launched 2 identical m1.large instances. Each instance had a different processor, with a significantly different clock speed (although clock speed can be a poor comparison for performance).

model name : Intel(R) Xeon(R) CPU E5645 @ 2.40GHz

versus

model name : Intel(R) Xeon(R) CPU E5-2650 0 @ 2.00GHz
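If you are comparing more than a couple of instances, it helps to pull out just the model name rather than reading the whole file. The following is a minimal sketch; the helper name `cpu_model` and its optional file argument are my own inventions for illustration.

```shell
# cpu_model is a hypothetical helper: print the first "model name" value
# from a cpuinfo file. With no argument it reads the live /proc/cpuinfo;
# pass a path to inspect a copy saved from another machine.
cpu_model() {
    file="${1:-/proc/cpuinfo}"
    # Strip everything up to and including the colon and following whitespace,
    # then stop after the first match (all cores report the same model).
    awk '/^model name/ { sub(/^[^:]*:[ \t]*/, ""); print; exit }' "$file"
}
```

Running `cpu_model` on each instance right after launch gives a single line that can be compared at a glance.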

For each instance I ran 22 small benchmarks using a program called 'sysbench', and the differences were significant. Indicated below is the time taken in seconds to complete each benchmark. To start the test I ran

while true; do sysbench --test=cpu --cpu-max-prime=30000 run; done

in the command prompt, and the results were

| Intel(R) Xeon(R) CPU E5645 | Intel(R) Xeon(R) CPU E5-2650 |
|---|---|

As you can see, the variance in these numbers is very small, and a t-test gives a p-value of p = 8.25083889757E-100 for these two data sets. The E5645 machine completes the task in about 75% of the time of the E5-2650 machine. This means that if you had been running a CPU-bound task similar to the benchmark on the E5-2650 machine, you could have gotten about 33% more work done (1 / 0.75 ≈ 1.33) for exactly the same amount of money just by terminating and re-requesting the instance!
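The step from "75% of the time" to "33% more work" is just a reciprocal: finishing in a fraction r of the time means 1/r as much work per hour. Here is a small sketch of that arithmetic; `extra_work_percent` is a hypothetical helper name, not part of any tool.

```shell
# extra_work_percent is a hypothetical helper: given the fraction of time the
# faster machine needs (0.75 means 75%), print how much more work it gets
# through in the same wall-clock time, rounded to a whole percentage.
extra_work_percent() {
    awk -v ratio="$1" 'BEGIN { printf "%.0f\n", (1 / ratio - 1) * 100 }'
}

extra_work_percent 0.75   # prints 33
```

The same function works for any measured ratio, so you can plug in your own benchmark numbers to decide whether relaunching is worth the effort.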

For completeness' sake, the commands I used to run the above benchmarks are listed below:

# Make sure you have the AWS CLI installed

# Launch instance 1

aws ec2 run-instances --image-id ami-83dee0ea --count 1 --instance-type m1.large --key-name <key> --security-groups <security-group>

# Launch instance 2

aws ec2 run-instances --image-id ami-83dee0ea --count 1 --instance-type m1.large --key-name <key> --security-groups <security-group>

# Log into a server

ssh -i ~/.ssh/<your key file> ubuntu@<The public dns for each server>

sudo apt-get update

sudo apt-get install sysbench

while true; do sysbench --test=cpu --cpu-max-prime=30000 run; done
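The infinite loop above prints the full sysbench report every time, which is awkward to compare across machines. The sketch below runs a fixed number of iterations and keeps only the elapsed time from each one. It assumes the report contains a line of the form `total time: 35.8343s`, which is what sysbench 0.4.x printed as far as I know; the helper names `total_time` and `run_benchmarks` are hypothetical.

```shell
# total_time is a hypothetical helper: read a sysbench report on stdin and
# print the number from its "total time:" line without the trailing "s".
# The line format is an assumption based on sysbench 0.4.x output.
total_time() {
    awk '/total time:/ { sub(/s$/, "", $3); print $3; exit }'
}

# run_benchmarks is a hypothetical helper: run the CPU benchmark N times
# (default 22, the number of runs used in this article) and print one
# elapsed time per line.
run_benchmarks() {
    runs="${1:-22}"
    i=0
    while [ "$i" -lt "$runs" ]; do
        sysbench --test=cpu --cpu-max-prime=30000 run | total_time
        i=$((i + 1))
    done
}
```

For example, `run_benchmarks 22 > times.txt` on each instance leaves one file per machine that can be fed straight into a statistics tool.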

The way I found out about this originally was completely by accident. I was working on a project to build a distributed system that would split up some intense math calculations. The project used some fancy math software and Python packages that needed to be compiled from source. When I tried to run the simulation, I would get a deadlock that I traced to a couple of the instances, where the process would die immediately after it started. What was stranger was that the process would be killed with 'illegal instruction' only on these machines, and would run fine on all the other ones.

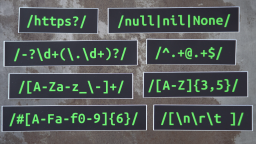

The problem turned out to be that the misbehaving instances used a different processor, and the hypervisor was incorrectly configured: it presented a flag to processes running in the VM claiming that AVX instructions (https://software.intel.com/en-us/blogs/2013/avx-512-instructions) were supported, even though the hypervisor had disabled those instructions on the processor. This meant that when programs were compiled on these instances, the compiler would produce machine code that used the optimized math instructions which had been disabled, resulting in the 'illegal instruction' error whenever the program was run.
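The flags that the VM advertises are visible on the `flags` line of /proc/cpuinfo, so it is easy to see which instances claim AVX support. A minimal sketch follows; `has_cpu_flag` is a hypothetical helper name. Note that this only reports what the hypervisor advertises. In the situation described above the flag was present but the instruction still faulted, so a check like this would only have helped to spot that the instances differed, not that the flag was wrong.

```shell
# has_cpu_flag is a hypothetical helper: succeed (exit 0) if the named flag
# appears on the first "flags" line of a cpuinfo file, fail (exit 1) otherwise.
# With no second argument it reads the live /proc/cpuinfo.
has_cpu_flag() {
    flag="$1"
    file="${2:-/proc/cpuinfo}"
    awk -v want="$flag" '
        /^flags/ {
            # Compare whole fields so that "avx" does not match "avx2".
            for (i = 1; i <= NF; i++) if ($i == want) found = 1
            exit
        }
        END { exit(found ? 0 : 1) }
    ' "$file"
}
```

A typical use would be `has_cpu_flag avx && echo "AVX advertised"`, run on every instance before compiling anything there.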
