Should I use Signed or Unsigned Ints In C? (Part 1)

2015-07-27 - By Robert Elder

To sign or not to sign, that is the question.

- Shakespeare

Updated August 15, 2015:

When I first wrote this article I didn't think many people would ever read it, but it turned out that it was submitted to 3 different subreddits and Hacker News, generating 320 comments and 17,986 unique page views over a few days. It looks like the majority of people don't agree with my conclusion, and you can read their comments at the links below. I've taken time to read over all the comments and I still prefer unsigned:

I also made a few corrections to this article based on comments:

- Clarified that you still can't declare an 'unsigned int' even in Java 8 (it works inside the 'int' type). (__konrad, wuch)

- Updated reference to Java specs on overflow behaviour. (BS_in_BS, wuch)

- More specific on constraints for shift example (cautious_int)

In addition, I have decided to create a part 2 that covers some aspects of signed versus unsigned integers that I didn't cover in the first article. I also justify why I still prefer unsigned.

Original Introduction

If you've ever asked yourself "Which is better, 'signed' or 'unsigned' ints?" this article may help you decide for yourself. As you're probably already aware, questions about which commonly used alternative is better are usually answered with "Neither is universally better, they are both better in specific circumstances." Despite this, it is interesting to consider the question "If you were stranded on a desert island with only signed or unsigned integers, which one would you pick?" This article will specifically look at the differences between signed and unsigned integers in the C programming language. If you're considering a language other than C (like Java) you may come to another conclusion.

Summary

To summarize my conclusion, I will claim that unsigned integers are better than signed integers for programming in C, but there are cases where you can't avoid using signed integers, such as return values from the standard library and practical computation involving concepts that must represent negative values (like temperatures). It is worth noting that my conclusion is in contradiction of Google's C++ coding standards, and I attempt to justify this below.

I started thinking about this question when I was working on my design for the One Page CPU (a very minimal CPU specification that can be printed on one page). I wanted to keep the instruction set small, so I decided that I would make the underlying CPU support either signed, or unsigned word operations, but not both. The reasoning being that you could simply emulate one in terms of the other (with some performance penalties).

As outlined below, my reasons for saying that unsigned integers are better than signed integers are mainly related to the well defined behaviour of unsigned integers, and the relatively undefined (or implementation defined) behaviour of signed integers in overflow conditions and in certain shift operations. If you're considering other languages, such as Java, you might have a case for saying that 'signed' ints are better since they are more well defined in that language. In fact, prior to Java 8, you could not even represent 32 bit unsigned integers in an 'int'. In relation to signed int overflow, the Java docs say "The integer operators do not indicate overflow or underflow in any way." and "The integral types are ... signed two's-complement integers" [1] "If an integer addition overflows, then the result is the low-order bits of the mathematical sum as represented in some sufficiently large two's-complement format. If overflow occurs, then the sign of the result is not the same as the sign of the mathematical sum of the two operand values."[1].

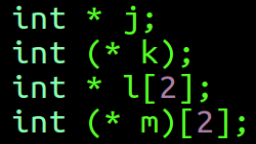

Let's start by looking at the different ways of declaring ints in C:

int i; /* Signed */

signed int i; /* Signed, exactly the same meaning as the first one */

signed i; /* Signed, exactly the same meaning as the first and second one */

unsigned int i; /* Unsigned */

unsigned i; /* Unsigned, same meaning as the one above */

In the C standard, 'int' is defined to be a signed type. Note, however, that this pattern doesn't generalize to all signable number types. 'char' and 'signed char' are not necessarily the same. This fact is explicitly stated in the C standard (C99 Section 6.2.5, paragraph 15), and the reasoning for this inconsistency is to allow backward compatibility with very old uses of the C language.

char c; /* Signed or unsigned? The standard says it could be either. */

signed char c;

unsigned char c;
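If you ever need to know which choice your implementation made for plain 'char', a minimal sketch like the following can tell you. The function names here are hypothetical (they are not from any library), and the only facility relied on is CHAR_MIN and UCHAR_MAX from limits.h.

```c
#include <limits.h>

/* Returns 1 if plain 'char' is signed on this implementation, 0 otherwise.
   CHAR_MIN is 0 when 'char' is unsigned and negative when it is signed. */
int plain_char_is_signed(void){
    return CHAR_MIN < 0;
}

/* Hypothetical helper: read a char as a value in the range 0..UCHAR_MAX,
   regardless of whether plain 'char' is signed. The conversion to
   'unsigned char' is always well defined. */
unsigned int byte_value(char c){
    return (unsigned int)(unsigned char)c;
}
```

Going through 'unsigned char' before widening is the usual way to avoid surprises when a 'char' holding a high byte value gets promoted to 'int'.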

Signed Overflow is Undefined

A good introduction to the undefined behaviour of signed integers is to observe that even the simplest of expressions can produce undefined behaviour:

int add(int a, int b){

return a + b; /* This is undefined if the mathematical sum is greater than INT_MAX or less than INT_MIN */

}

Signed overflow is not defined, and even though we could define it, compiler authors generally don't want it to be defined because the lack of definition allows for certain optimizations that can be common. An example of this is provided at http://www.airs.com/blog/archives/120 that involves a for loop which can be simplified to a closed form expression, but only if we assume that signed overflow does not exist in the program. There are also cases for saying that in certain situations the compiler could generate slower code for array indexing using unsigned integers than it would for signed integers. Since signed overflow is assumed to never happen, there is no need to check for it, but with unsigned integers you need to make sure that wrapping happens. In the end you always need to benchmark.
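Since the addition itself is where the undefined behaviour happens, any check has to run before the addition, using only operations that cannot overflow. Here is a minimal sketch of that idea. The name checked_add and its return convention are my own assumptions for illustration, not a standard function.

```c
#include <limits.h>

/* Hypothetical helper: stores a + b in *result and returns 1 when the sum
   is representable in an int. Returns 0 and leaves *result untouched when
   the addition would overflow in either direction. */
int checked_add(int a, int b, int *result){
    /* INT_MAX - b cannot overflow when b is positive */
    if(b > 0 && a > INT_MAX - b){
        return 0;
    }
    /* INT_MIN - b cannot overflow when b is negative */
    if(b < 0 && a < INT_MIN - b){
        return 0;
    }
    *result = a + b; /* Safe: the range was checked above */
    return 1;
}
```

A caller would write something like 'if(checked_add(x, y, &sum)){ ... }' and handle the failure case explicitly instead of letting the overflow happen.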

Unsigned Overflow is Well Defined

(C99, Section 6.2.5) "A computation involving unsigned operands can never overflow, because a result that cannot be represented by the resulting unsigned integer type is reduced modulo the number that is one greater than the largest value that can be represented by the resulting type."
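As a small illustration of the rule quoted above, the following sketch shows the two edge cases that people usually ask about. The function names are hypothetical; the behaviour follows directly from the modulo (UINT_MAX + 1) arithmetic in the quote.

```c
#include <limits.h>

/* Adds one with wraparound: UINT_MAX + 1u is reduced modulo
   (UINT_MAX + 1), which gives 0u. */
unsigned int wrapping_increment(unsigned int x){
    return x + 1u;
}

/* Subtracts one with wraparound: 0u - 1u is reduced modulo
   (UINT_MAX + 1), which gives UINT_MAX. */
unsigned int wrapping_decrement(unsigned int x){
    return x - 1u;
}
```

Neither function needs a range check to stay within defined behaviour, which is the property the rest of this article leans on.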

The History

The reasoning for signed overflow not being defined is related to the fact that, in the past, it was important to be considerate of the different underlying bit representations for negative numbers. The development of C started in 1972 on the PDP-11 which was a two's complement machine. The PDP-1 was a one's complement machine that was still in production as late as 1969, and these machines were used well into the 1970's, so the idea of supporting one's complement was very much on people's radar back then. In fact, the UNIVAC 1100/2200 series is another example of a machine with one's complement data representation that appears to still be in active use today. The IBM 7090 is an example of a machine using sign-magnitude data representations. IBM 7090s controlled the Mercury and Gemini space flights and the Ballistic Missile Early Warning System until well into the 1980s[1]. Since engineering standards themselves are created to be consumed by disciplined engineering activities (like ballistic missile warning systems), it seems reasonable that the standards committee would give more weight to these applications than they would to civilian applications.

Since there is only one reasonable bit representation for positive integers, overflow conditions only have one sensible result. For signed integers, we have to be considerate of two's complement (used on nearly every computer today), one's complement (rarely used but shows up occasionally), and sign magnitude representation (mostly obsolete now). These representations are explicitly listed in ISO C (C99), section 6.2.6.2/2. Each of these bit representations has different rules for arithmetic on negative numbers, and this is why it wasn't possible to easily standardize behaviour on overflows. Both one's complement and sign magnitude representations also include a concept of 'negative zero' which can lead to 'trap' values. GCC in particular has taken advantage of this undefined behaviour to create certain program optimizations (since it never needs to worry about someone doing signed overflow in a C program that is considered 'correct' for any given architecture). This dependency on signed overflow being undefined behaviour is one of the major reasons we still don't explicitly define signed integer overflow, even though there is now generally only one reasonable result on today's two's complement machines.

Another possible benefit to allowing one integer type to have defined behaviour on overflow and one to have undefined behaviour, is that it would allow a conforming implementation to use a trap instruction (software triggered interrupt) for the undefined case. This would allow you to have unsigned integer operations overflow silently, but signed integer overflows could trigger an exception which causes a context switch into the kernel without violating the C standard. Architectures like MIPS and Alpha have special instructions that work as you expect in non-overflow cases, but cause traps in the event that the result has an overflow[1]. In practice these instructions are not typically used, but it could have benefits for important safety critical systems by allowing the kernel to do something exceptional in the event that a process encounters overflow. Maybe they should have done this on Cluster.

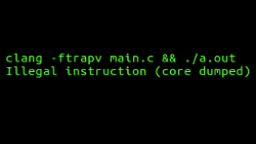

GCC claims to have support for a flag -ftrapv which allows you to trap on signed integer overflow, although it seems to be poorly supported.

Another interesting read on signed integer overflow and how it relates to GCC and optimization hazards in the Linux Kernel can be found here.

A very obscure complication of signed integer types comes from C99 section 6.2.6.2 Integer types. It states that there is a condition where signed integers can contain 'trap values' if the underlying architecture uses one's complement representation. Most modern computers don't (they use two's complement).

When considering conversions from signed to unsigned values, it is worth noting that:

unsigned int x = -1; /* This is well-defined with x having a resulting value of UINT_MAX */

is well defined, and gives a result of UINT_MAX. In this situation, we start the assignment by observing that the integer constant of '1' is within the range that can be represented by a signed integer. By following the decimal constant rules in C99 section 6.4.4.1 Integer constants, we observe that the integer constant '1' has type 'int' or more explicitly 'signed int'. If the integer constant '1' had been something like '4000000000' instead, it would not have fit into the range of an 'int' (on a machine with 32 bit ints), and the constant '4000000000' would have been given the type 'long int' (or 'long long int' if 'long int' is also too small to hold it). Next, our 'int' value of '1' is negated, giving us an 'int' value of -1. Now to consider the assignment: The C standard (section 6.3.1.3 Signed and unsigned integers) states that 'if the new type is unsigned, the value is converted by repeatedly adding or subtracting one more than the maximum value that can be represented in the new type until the value is in the range of the new type'. Following these rules, we would find that the resulting value of x is (-1 + (UINT_MAX + 1)) or UINT_MAX. Converting a signed integer to an unsigned integer is always defined; however, converting an unsigned integer to a signed integer is implementation defined if the value can't be represented in the new type.
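The asymmetry between the two conversion directions can be sketched as follows. Both function names are hypothetical, and the checked version is just one reasonable way to stay out of implementation defined territory.

```c
#include <limits.h>

/* Signed to unsigned is always defined: a negative value has
   (UINT_MAX + 1) added to it until it is in range. */
unsigned int to_unsigned(int value){
    return (unsigned int)value;
}

/* Unsigned to signed is only portable when the value fits, so this
   hypothetical helper checks the range first. It returns 1 and stores
   the converted value on success, or returns 0 and leaves *result
   untouched when the value is larger than INT_MAX. */
int to_signed_checked(unsigned int value, int *result){
    if(value > (unsigned int)INT_MAX){
        return 0;
    }
    *result = (int)value;
    return 1;
}
```

With these, 'to_unsigned(-1)' gives UINT_MAX on every conforming implementation, while the reverse conversion is refused rather than left to the compiler's documentation.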

Another situation where signed integers produce poorly defined behaviour is shift operations. For right and left shift operations, it is important to be aware that shifts can be either logical or arithmetic. I'll direct you to the Wikipedia article on arithmetic shifts if you want a good explanation of the difference. Usually, right shifts on negative signed values are implemented as an arithmetic shift; however, the C standard leaves this implementation defined.

Here is an excerpt from Section 6.5.7 Bitwise shift operators of the C99 standard:

"3 The integer promotions are performed on each of the operands. The type of the result is that of the promoted left operand. If the value of the right operand is negative or is greater than or equal to the width of the promoted left operand, the behavior is undefined.

4 The result of E1 << E2 is E1 left-shifted E2 bit positions; vacated bits are filled with zeros. If E1 has an unsigned type, the value of the result is E1 x 2^E2 , reduced modulo one more than the maximum value representable in the result type. If E1 has a signed type and nonnegative value, and E1 x 2^E2 is representable in the result type, then that is the resulting value; otherwise, the behavior is undefined.

5 The result of E1 >> E2 is E1 right-shifted E2 bit positions. If E1 has an unsigned type or if E1 has a signed type and a nonnegative value, the value of the result is the integral part of the quotient of E1 / 2^E2 . If E1 has a signed type and a negative value, the resulting value is implementation-defined."

/* Assume sizeof(int) == sizeof(unsigned int) == 4 */

/* and that UINT_MAX = 2^32 -1 */

int a = 1;

int b = -1;

a << b; /* Undefined because b is signed and negative (rule 3) */

int c = -1;

int d = 1;

c << d; /* Undefined because c is signed and negative (rule 4) */

int e = 1;

int f = 31;

e << f; /* Undefined because 2147483648 can't be represented in a 32 bit signed int */

unsigned int g = 1;

unsigned int h = 31;

g << h; /* Well defined because 2147483648 can be represented in a 32 bit unsigned int */

int i = 1;

int j = -1;

i >> j; /* Not defined. j is negative, rule 3. */

int k = -1;

int l = 1;

k >> l; /* Implementation defined. See rule 5. */

/* Left shift cases where both operands are promoted to unsigned

integers are well defined if E2 <= 31, and equal to (E1 * 2^E2) mod (UINT_MAX + 1) */

/* Right shift cases where both operands are promoted to unsigned

integers are well defined if E2 <= 31 */

/* Right shift cases where both operands are promoted to unsigned

integers are undefined if E2 > 31 */

/* Conclusions contingent on 32 bit assumption stated above */

As you can see, the behaviour of shifting unsigned integers is (almost) always defined, but the behaviour of shifting signed integers is a complex mixture of defined, implementation defined, and undefined behaviour. There are a few security implications related to this undefined behaviour for shifting operations.
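The one remaining undefined case for unsigned shifts is a shift count that is greater than or equal to the width of the type (rule 3). A minimal sketch of guarding against it might look like this. The helper name and the choice to return 0 for oversized counts are my own assumptions, and the width calculation assumes 'unsigned int' has no padding bits, which is true on mainstream platforms.

```c
#include <limits.h>

/* Number of bits in an unsigned int, assuming no padding bits. */
#define UINT_WIDTH_BITS ((unsigned int)(sizeof(unsigned int) * CHAR_BIT))

/* Hypothetical helper: returns value << count, or 0 when count is at
   least the width of the type (the case rule 3 leaves undefined).
   Returning 0 matches the idea that every bit has been shifted out. */
unsigned int shift_left_or_zero(unsigned int value, unsigned int count){
    if(count >= UINT_WIDTH_BITS){
        return 0u;
    }
    return value << count; /* Defined: reduced modulo (UINT_MAX + 1) */
}
```

Because the count is itself unsigned, the 'negative shift count' case from rule 3 cannot occur at all, so a single comparison covers everything.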

For signed values, there is a difference between logical and arithmetic shift, and this can make things complicated because sign extension rules can be different if the underlying representation is not using two's complement.
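If you need an arithmetic right shift on a negative value and don't want to depend on what your compiler does for rule 5, one possible sketch is below. It assumes a two's complement representation (so that ~x equals -x - 1), and the function name and the handling of oversized counts are hypothetical choices of mine.

```c
#include <limits.h>

/* Hypothetical helper: arithmetic (sign-extending) right shift that only
   ever shifts nonnegative values, so it never reaches the implementation
   defined case. Assumes two's complement, where ~x == -x - 1. */
int arithmetic_shift_right(int value, unsigned int count){
    if(count >= (unsigned int)(sizeof(int) * CHAR_BIT)){
        /* Every value bit is shifted out; only the sign remains */
        return value < 0 ? -1 : 0;
    }
    if(value >= 0){
        return value >> count; /* Defined by rule 5 */
    }
    /* ~value is nonnegative here, so the shift is defined. Inverting
       again restores the sign and rounds toward negative infinity. */
    return ~(~value >> count);
}
```

For example, shifting -7 right by one position this way gives -4, which is what an arithmetic shift produces on two's complement hardware.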

If I do happen to convert you to using unsigned integers with this article, you should be aware of one possible gotcha that you might not think of at first if you just convert all your loops to use unsigned ints instead of ints. Here are some example loops using both signed and unsigned integers for the loop counter. Note that you have to be careful counting down with unsigned integers.

#include <stdio.h>

#define SIZE 5 /* Example element count; any small non-negative value works */

int main(void){

int i_s;

int size_s = SIZE;

unsigned int i_u;

unsigned int size_u = SIZE;

/* Looping up with signed: */

for(i_s = 0; i_s < size_s; i_s++){

printf("%i Signed Up.\n", i_s);

}

/* Looping down with signed: */

for(i_s = size_s -1; i_s > -1; i_s--){

printf("%i Signed Down.\n", i_s);

}

/* Looping up with unsigned int: */

for(i_u = 0; i_u < size_u; i_u++){

printf("%u Unsigned Up.\n", i_u);

}

/* Looping down with unsigned int (You have to be careful with this one): */

for(i_u = size_u; i_u-- > 0;){

printf("%u Unsigned Down.\n", i_u);

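}/* If you don't pay attention to this case and do something like

for(i_u = size_u - 1; i_u >= 0; i_u--) you will get an infinite loop

because i_u >= 0 is always true for an unsigned value: decrementing 0 gives UINT_MAX!

The literal translation i_u > -1 fails the other way: -1 is converted to UINT_MAX,

so the condition is never true and the loop body never runs.

*/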

}

So far this discussion has not considered cases where you need to represent quantities which can inherently be negative. An example would be things like representing temperatures around freezing point, or bank account debits/credits. An obvious way to do this with unsigned integers is to use an unsigned integer to represent your magnitude, and an extra 'flag' unsigned int to specify if the magnitude is positive or negative (with something like 0 for negative and 1 for positive).

struct safe_signed_int{

unsigned int magnitude;

unsigned int is_positive; /* 0 for magnitude representing negative, 1 for positive magnitude */

};

This wastes a lot of space, and it also makes any arithmetic operation you want to do unnecessarily complicated. In these cases it is certainly justifiable to use signed integers, but only as long as you can prove that they stay within the 'int' range that is provided with your target architecture. In general, this kind of proof isn't something the compiler can do at compile time, so you may be taking the chance of having undefined behaviour sneak into your program if you're not careful. If you do need to use signed integers you can find some overflow check helper functions. These were referenced on this bug report by one of the maintainers of GCC.
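One option I'm aware of, which may or may not be the helpers linked above, is the overflow-checking builtin that GCC (version 5 and later) and Clang provide. This is a compiler extension rather than standard C, so the sketch below assumes one of those compilers. The wrapper name is hypothetical.

```c
#include <limits.h>

/* Assumes GCC 5 or later, or a Clang version providing the same builtin.
   __builtin_add_overflow is a compiler extension, not standard C.
   Hypothetical wrapper: returns 1 if a + b does not fit in an int,
   and 0 otherwise. The computed value is discarded here. */
int add_would_overflow(int a, int b){
    int ignored;
    return __builtin_add_overflow(a, b, &ignored) ? 1 : 0;
}
```

The builtin performs the addition as if with unlimited precision and reports whether the result fit, so no undefined behaviour is involved in the check itself.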

If you try to do the overflow check yourself, don't do it like this, because it won't work when compiled with clang's -O2 flag:

#include <stdio.h>

#include <limits.h>

int get_int_max(void){ return INT_MAX; } /* Fool static analysis with function indirection */

int main(void){

int a = get_int_max();

int b = 10;

/* Attempt to detect overflow by triggering it, and assuming two's complement wrapping */

if(!(a + b > a)){

printf("Detected overflow. This prints with clang -O0 main.c version 3.4-1ubuntu3.\n");

}else{

printf("Did not detect overflow. This prints with clang -O2 main.c version 3.4-1ubuntu3.\n");

}

}
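For contrast, the same 'trigger it and then look' approach is legitimate for unsigned integers, because the wrapped result is defined by the standard. A minimal sketch, with a hypothetical function name:

```c
#include <limits.h>

/* Hypothetical helper: returns 1 if a + b wrapped around, 0 otherwise.
   The sum is reduced modulo (UINT_MAX + 1), so a wrapped sum is always
   smaller than either operand. The compiler is not allowed to assume
   this comparison away, unlike the signed version above. */
int unsigned_add_wraps(unsigned int a, unsigned int b){
    return (a + b) < a;
}
```

This only holds for 'unsigned int' and wider unsigned types. Narrower unsigned types such as 'unsigned char' are promoted to 'int' before the addition, so the comparison would not behave the same way for them.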

It is worth noting that Google's style guide for C++ programming explicitly discourages the use of unsigned integers[1]. This is stated quite clearly as 'Don't use an unsigned type' in the section 'On Unsigned Integers'. Their reasoning states that unsigned integers are 'intended as a form of self-documentation' for documenting that the value cannot be negative. Overall their argument for avoiding unsigned integers and sample code seems surprisingly weak for a company of the caliber of Google. The most important reason to use unsigned instead of signed is not self-documentation, it is to avoid undefined behaviour.

A final argument you could make against using unsigned integers is that you're eventually going to need to interact with the C standard library. This is a problem if you're trying to avoid signed values, because the C standard library uses signed ints for return values nearly everywhere! Fortunately, the C standard library does use size_t for quantities that cannot be negative, such as object sizes and lengths. Under the hood size_t is typedef'ed to be an unsigned integer type. Some people prefer to use size_t everywhere instead of unsigned int, because size_t is defined to be the return type of the 'sizeof' operator. A consequence of this is that the size_t type should be compatible as an array index for any array, whereas an unsigned int might not be.
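Here is a minimal sketch of both situations: indexing with size_t, and converting a signed return value from the standard library after checking it. The function names are hypothetical. The snprintf call with a NULL buffer and a size of 0 is valid in C99 and returns the number of characters that would have been written, or a negative value on error.

```c
#include <stddef.h>
#include <stdio.h>

/* Sums the bytes of a buffer using a size_t index, which can index
   any array that can exist on the implementation. */
unsigned long sum_bytes(const unsigned char *data, size_t length){
    unsigned long total = 0;
    size_t i;
    for(i = 0; i < length; i++){
        total += data[i];
    }
    return total;
}

/* Hypothetical helper: number of characters needed to print 'value'
   in decimal. snprintf returns a signed int, so the negative error
   case is checked before converting to size_t. Returns 0 on error. */
size_t formatted_length(unsigned int value){
    int n = snprintf(NULL, 0, "%u", value);
    if(n < 0){
        return 0;
    }
    return (size_t)n;
}
```

The pattern in formatted_length is the general one: keep the signed value only long enough to test for the error case, then convert it to an unsigned type for the rest of the program.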

Conclusion

In summary: Try to use unsigned integers whenever possible because signed integers have many more corner cases involving undefined behaviour; be careful of the gotcha with counting down in for loops using unsigned integer counters; the C standard library uses signed ints for return values, so you have to use them (or convert them) there; some quantities can inherently be negative and if you know that they'll never overflow then signed types are safe to use; size_t is defined to be an unsigned type, and it can have advantages over plain 'unsigned int'; Google's C++ style guide discourages using unsigned integers, but I'm going to contradict them.

Since this article was so popular, I've created a part 2.

If you found this interesting, you might want to also check out my C compiler.

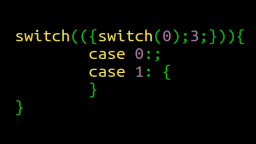

How to Get Fired Using Switch Statements & Statement Expressions

Published 2016-10-27 |

Buy Now -> |

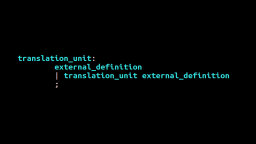

The Jim Roskind C and C++ Grammars

Published 2018-02-15 |

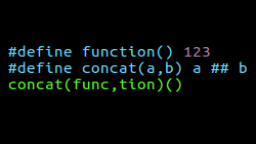

7 Scandalous Weird Old Things About The C Preprocessor

Published 2015-09-20 |

GCC's Signed Overflow Trapping With -ftrapv Silently Doesn't Work

Published 2016-05-25 |

Should I use Signed or Unsigned Ints In C? (Part 2)

Published 2015-08-16 |

Strange Corners of C

Published 2015-05-25 |

Building A C Compiler Type System - Part 1: The Formidable Declarator

Published 2016-07-07 |

| Join My Mailing List Privacy Policy |

Why Bother Subscribing?

|