Introducing The Robert Elder Software Linux Operating System

2016-09-27 - By Robert Elder

Dec 12, 2019 Update: This piece of software is no longer available as I have turned it off due to lack of interest. The article below used to contain a number of links which I have removed. The content below remains only for archival purposes.

Introduction

I've decided to create my own Linux® distribution to support a product for people who want to learn C programming on Linux.

How To Try It Out

You can find the install instructions here.

Let's review some of the people and organizations who made it possible for someone like me to create my own distro:

The Linux From Scratch Project

It's safe to say that without a resource like the Linux From Scratch project, I never would have attempted building a distro from scratch. It's one thing to know about kernel development, cross-compilers, and all the nuts and bolts of building an operating system. It's another thing entirely to integrate it all together to build a system with so many legacy quirks and rough edges. This is exactly what the LFS project does and it does a fantastic job of it.

In an abstract way, I now think of the LFS project as that essential piece needed to bootstrap a fundamental system like an iron forge, or farm. It's easy to make an iron forge with iron tools, but what do you do when you're building the first one? Similarly, you'll have a hard time growing a field of corn if you don't know where to find any seeds. The LFS project provides a working proof of concept for how to bootstrap a system from scratch for anyone who needs to, or wants to understand how the bootstrapping process works.

To put it in a less abstract way: The LFS project provides you with a collection of packages (with verifiable md5sums) and corresponding instructions on exactly what to do to install these packages. With some exceptions, you can almost always assume that the instructions will work since they were originally tested on the exact packages that are made available. Following through the LFS project is not difficult, but it is tedious. One word of warning though: Do not miss a step or make a mistake. If you do, you will have a very bad time, and you will also learn a lot.
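As a rough illustration of the checksum step, here is a minimal sketch using `md5sum -c` from GNU coreutils. The checksum file name `md5sums` and the helper name `verify_packages` are assumptions made for this example, not something taken from the LFS instructions.

```shell
#!/usr/bin/env bash
# Sketch: verify downloaded source packages against a checksum list.
# Assumes a file named "md5sums" (hypothetical name) inside the given
# directory, in the "<hash>  <filename>" format that md5sum emits.

verify_packages() {
    local dir="$1"
    # Run in a subshell so the caller's working directory is untouched.
    # md5sum -c exits non-zero if any listed file is missing or does
    # not match its recorded hash.
    ( cd "$dir" && md5sum -c md5sums )
}
```

Something like `verify_packages ./sources && echo "all packages verified"` would then stop you from building anything from a corrupted or incomplete download.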

Most of the value that the LFS project provides is that it shields you from needing to figure out the idiosyncrasies of the 230+ individual packages required to build a useful system with an X server and a desktop manager. Sure, most of them just require ./configure && make && make install, but someone has to go through the process of figuring out what package versions will play nicely with each other, and how to flatten out the dependency graph. And you can't forget about those quirky fixes that are required because a certain package maintainer has their own unique non-standard way of doing things. There are also some tricky parts at the very beginning related to making sure that the rest of your software is associated with the correct version of glibc, binutils and the Linux kernel headers.

In going through LFS, I think I only hit a few snags. One was where I missed a package install, so every install after it would just end up failing in a way I didn't recognize at first. I also had some weird, hard-to-debug issues when I missed an X input library. The craziest problems I encountered were actually because my RAM started failing during the install in a way that incorrectly set a certain bit high. This problem manifested itself while compiling GCC with GCC and I spent a good 3 hours trying to figure out why I was getting such strange compiler errors when I eventually thought "Maybe I should run memtest?".

One final awesome thing that LFS does is provide build and test logs. This is extremely helpful, because many of the packages have test cases that are expected to fail, and various other nasty looking error messages that aren't actually problems. If you see something that looks bad, you can just check if it appears in the reference log, and if it does you're probably fine. If it doesn't, you probably need to go back and find out where your logs start to diverge.
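The check against a reference log can be sketched with ordinary `grep` and `diff`. The function names and log paths here are hypothetical. Real build logs also contain timings and paths that differ between machines, so a raw diff will be noisier than this suggests.

```shell
#!/usr/bin/env bash
# Sketch: decide whether a scary-looking message is expected by checking
# whether the same text appears in a known-good reference log.
# Both file paths are supplied by the caller; none are real LFS paths.

appears_in_reference() {
    local message="$1" reference_log="$2"
    # -F: treat the message as a literal string, not a regex.
    # -q: print nothing, just set the exit status (0 if found).
    grep -Fq -- "$message" "$reference_log"
}

first_differences() {
    local reference_log="$1" my_log="$2"
    # Show only the start of the diff, which is where the two logs
    # begin to diverge.
    diff "$reference_log" "$my_log" | head -n 20
}
```

For example, `appears_in_reference 'FAIL: some-test' reference.log` succeeding would suggest the failure is expected, while `first_differences reference.log my-build.log` gives a starting point when it is not.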

Having also tried out Arch Linux, my opinion is that it's actually easier (but more time consuming) to get a working system set up with LFS than with Arch. Also note that this comparison is based on the experience of doing this process in a virtual machine where an unbootable system can simply be rolled back. Since Arch emphasizes a rolling release system for its packages, there is no guarantee that packages will play nicely together. LFS, on the other hand, has an entire version set of all packages together with md5sums to verify them. I recall trying to set up a desktop manager with Arch, and during the install of GTK, the compile step failed with a missing external symbol. After investigating this online I found some posts of devs discussing the problem and their conversation went something like this: "Dev1: Won't this break the build for everyone? Dev2: Oh, don't worry this will only affect people on the bleeding edge like Arch users. Dev1: Oh, ok, I guess it's not a problem then. Dev2: Conversation completed successfully.". If you were to work through the process of fixing this yourself you'd just have to experiment with previous package versions and hope to discover a combination of versions that works well together (or find a patch, or modify the source yourself).

The GNU Ecosystem

A wise man once said "the version of GNU which is widely used today is often called “Linux”, and many of its users are not aware that it is basically the GNU system". Going through the manual process of installing each and every one of the building blocks that I use every day really made me appreciate how much work the GNU project really has done. Pretty much every one of the basic portable 'Linux' commands is really something implemented by a GNU package: find, make, diff, ls, cd, rm, touch, mv, chmod, cat, ... Of course many of these programs have alternative implementations (they are specified by the POSIX standard), but the GNU implementations seem to be the standard among most major distributions.

The GPL license is also worth talking about. It's an important part of the GNU ecosystem, and even if you don't use the GPL license for your open source projects, I think that you still benefit from the very existence of the GPL license. The GPL license came along at a fairly early time in software history, and since its advocates held such a highly refined vision on how software should be shared, it forced people (lawyers) to pay attention to a novel category of licenses. The zealousness of this license helped it define the 'spectrum' of free/open/proprietary software, where there otherwise might have just been a 'cluster' of licenses. Of course, it's impossible to know what would happen in an alternate course of history, but if the GPL had not existed I would not be surprised if the enforceability of less descriptive licenses such as the MIT license would have been eroded by case law. A license could be written with the exact same words, but it's the generally accepted interpretation of those words and the case law surrounding them that matters.

The X Libraries

There are a lot of X libraries. Not only are there a lot of them, but many have a lot of dependencies! Simply going through the process of installing them is a formidable task. I have difficulty imagining how much work it would be to actually write all of them.

The Linux Kernel

Last but not least, you can't forget the actual Linux kernel. The scale at which the kernel development now takes place is truly breathtaking. Here is a 2015 article describing some statistics on kernel contributions.

It's interesting to consider why Linux is so popular, and I think one of the main reasons for this is a sincere desire to make it open and collaborative. It's one thing to tell people that you're open and collaborative, but I tend to place more weight on behaviour. As an example of this, on 2008-02-24 the Linux Mark Institute stated "LMI has restructured its sublicensing program. Our new sublicense agreement is: Free — approved sublicense holders pay no fees". The usual change that we see is to go from free to paid, but making something free when your brand power would allow you to charge whatever you want is a good sign.

Why Build a New Distro?

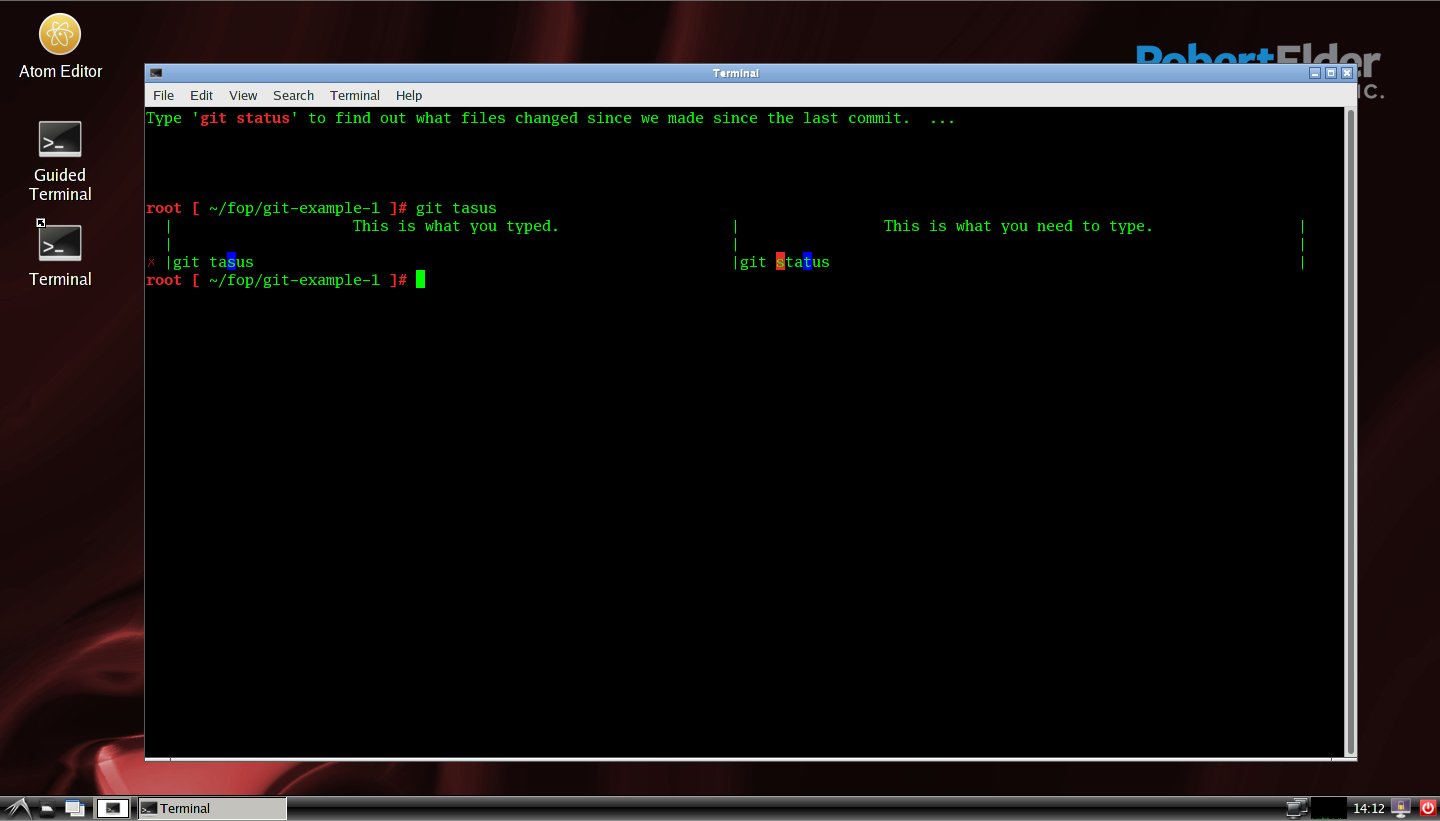

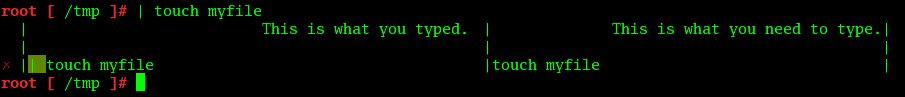

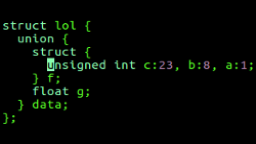

A worthwhile question to ask is why it would be necessary to build a new distribution when there are already so many others out there. The reason is that I felt that in order to deliver the best experience I would need to be able to control every aspect of the software stack. As an example, in order to teach new users about how to type basic bash shell commands, they need better feedback than what bash currently offers. I experimented with several designs that used some hacky combinations of the DEBUG hook in bash, and a funky PS1 variable, but in the end I threw them out and simply patched bash itself. The result is that now I can catch anything a user types into bash and send this to a hook that decides whether it should run or not. This isn't possible using the DEBUG trap, since it won't let you trap syntax errors. Here is an example:

In the above example, if a user accidentally types '|' before their command into an unpatched bash session with the DEBUG trap enabled, bash will complain about a syntax error and never forward what was typed onto the DEBUG trap. In the process of implementing this patch it became clear that the DEBUG trap was never intended to be used the way that I was trying to use it.
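The same limitation can be reproduced in a stock bash with a short sketch. The helper `run_with_debug_trap` is a hypothetical name written for this illustration and has nothing to do with the actual patch. It feeds one "typed" line to a fresh, non-interactive bash that has a DEBUG trap installed.

```shell
#!/usr/bin/env bash
# Sketch: show which typed lines ever reach a DEBUG trap in stock bash.
# run_with_debug_trap is a hypothetical helper written for this example.

run_with_debug_trap() {
    local typed_line="$1"
    # Start a fresh bash, install a DEBUG trap that reports each command
    # just before it runs, then feed it the "typed" line on stdin.
    # stderr is merged into stdout so syntax errors are visible too.
    printf '%s\n' \
        "trap 'echo \"trap saw: \$BASH_COMMAND\"' DEBUG" \
        "$typed_line" | bash 2>&1
}
```

Calling `run_with_debug_trap 'echo hello'` should print a `trap saw: echo hello` line before the command's own output. Calling `run_with_debug_trap '| echo hello'` should print only bash's syntax error, with no `trap saw:` line, because the line is rejected by the parser before any command exists for the trap to see.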

It's also worth mentioning that my patch probably breaks a few things. I'm pretty sure there are now bug cases with multi-line inputs, and I also had to disable history expansion, but it works for what is needed right now.

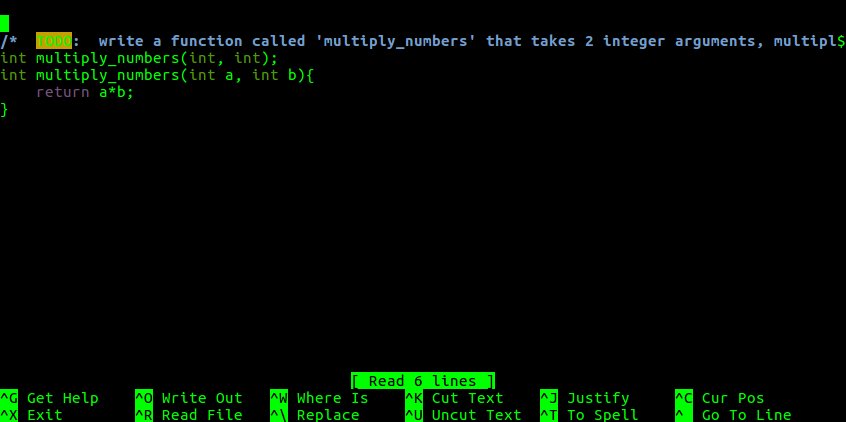

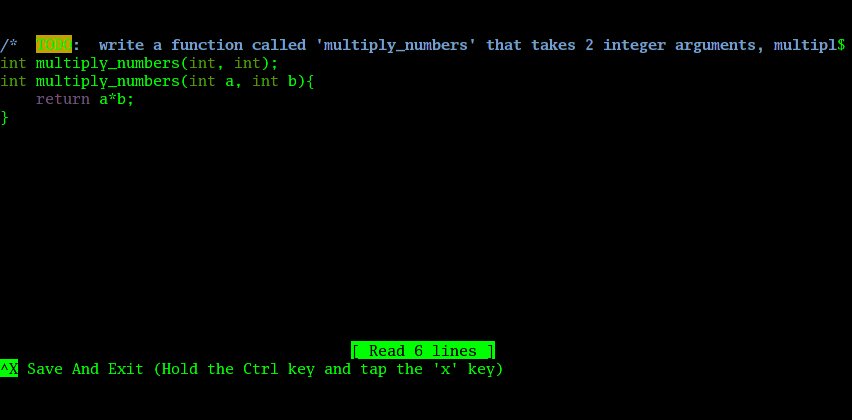

I made a few other simple (fairly sloppy) changes to the nano editor too. The default presentation of nano is much too complicated for beginner programmers with the huge list of cryptic shortcuts at the bottom. All they need is the simple ability to edit an existing file, then save and quit. No fancy productivity hotkeys, just change the file then save and exit. New users also don't know what ^X means, so I made it more descriptive. I also removed the options to specify a new file name and the prompt that asks you if you want to modify the previous file, so Ctrl X instantly saves and exits. Definitely not groundbreaking, but it makes all the difference to a new user:

Unpatched Nano

Patched Nano

The Learning Material

I talk more about this in the post about learning Linux commands and introductory C programming.

The general idea is just to make it as easy as possible for someone to jump into command line programming. At each stage, there is something that tells you what you need to do, or what command you need to type. If you do it wrong, it shows you the difference between the wrong thing you did and the correct thing that is expected. In addition, there is an activity feed that shows relevant search terms you can use to figure out what you are doing as you go.

Currently, there isn't much content, but I plan on adding more as people request it.

Intended for Virtual Use Only

I plan to only distribute this OS as a pre-installed .ova virtual machine appliance. Normally, you start out with an install iso, but one of the main points of creating a new distro was so that I can pre-install everything and remove the barrier to entry for students who don't understand how to install Linux themselves. This way, you just download the .ova file, download VirtualBox, and you're up and running in a few clicks. You might think that installing something like Ubuntu would be easy for beginners, but it is most definitely not.

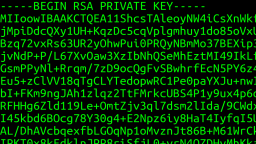

Security

A few of you out there are probably interested in what has been done to make sure that this OS is secure. The answer is 'not much'. In terms of evaluating the included packages, my strategy was simply to md5sum all packages, then regex replace the hashes to Google search queries. If there was a hash that didn't have any references on some form of trusted distro's package management pages, then I would investigate to figure out why. A rigorous evaluation of every package at this point is completely out of the question. I also rely on trusting the LFS people to have done some due diligence as well.
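The hash-to-search-query step could look something like the sketch below. The function name `hashes_to_queries` and the search URL format are assumptions made for this example, and it is not necessarily how the replacement was actually done.

```shell
#!/usr/bin/env bash
# Sketch: turn md5sum output into one web search URL per package hash.
# The search URL format below is an assumption made for this example.

hashes_to_queries() {
    # Reads "<32 hex chars>  <filename>" lines on stdin and rewrites
    # each one as a search URL for the hash, dropping the filename.
    sed -E 's#^([0-9a-f]{32}) .*$#https://www.google.com/search?q=\1#'
}
```

Running `md5sum sources/* | hashes_to_queries` would then print one URL per package. A hash that turns up nowhere on a trusted distro's package pages is the one to investigate by hand.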

Another aspect of security is staying secure during normal use. I have intentionally not installed a web browser, because I won't be able to do the necessary evaluations to make sure that it is secure enough to use. I would generally discourage anyone from using this OS for any network activity, other than the activity feed information which is sent in response to working through the learning material. Currently, this distro comes with no package manager, so updating things would need to be done manually.

Known Bugs

I'm pretty sure there are a lot of things wrong with this distro, but I'll work on what I can in the time available. Some known problems:

- Some of the course content is leaking processes when you close the terminal.

- OS is 32-bit x86 only. Requires host to be x86 compatible.

- Never been tested in VMware Player (it might work, but I only had time to test VirtualBox).

- Shutdown option is missing and logout just logs back in again.

- Likely networking issues for some configurations (due to missing kernel modules).

- Some instructions assume a US keyboard layout.

The Future

I have a lot of ideas for new things to add, but I'm going to wait for feedback before I spend too much time on them. I think there are a lot of opportunities for a distribution that emphasizes a user-friendly introduction to command line tools, and keeps in mind younger (and older) people who may not even be that familiar with any operating system.

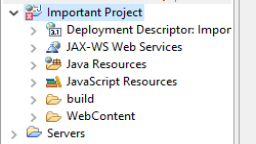

Silently Corrupting an Eclipse Workspace: The Ultimate Prank

Published 2017-03-23 |

Buy Now -> |

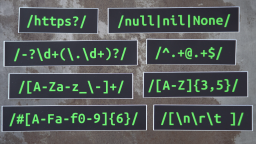

XKCD's StackSort Implemented In A Vim Regex

Published 2016-03-17 |

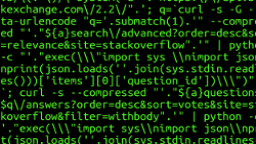

What is SSH? Linux Commands For Beginners

Published 2017-04-30 |

What is Git and Why Is It Useful?

Published 2016-12-15 |

Strange Corners of C

Published 2015-05-25 |

Virtual Memory With 256 Bytes of RAM - Interactive Demo

Published 2016-01-10 |

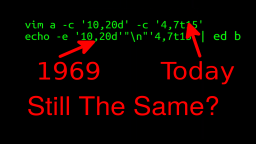

Can You Use 'ed' As A Drop-in Replacement For vim, grep & sed?

Published 2020-10-15 |

| Join My Mailing List Privacy Policy |

Why Bother Subscribing?

|